Our latest published education policy analysis looks at federal Shadow Minister for Education Tanya Plibersek’s proposal to raise the required marks for teaching degrees to the top 30% of school leavers. We argue that this proposal is not just wrongheaded but regressive, as it will shut out more people from diverse socio-economic, cultural and language backgrounds from the teaching profession. Sign up for a free trial at Crikey to read the finished piece, or read our draft here:

As federal Shadow Minister for Education Tanya Plibersek dared universities to defy her plan to raise the minimum Australian Tertiary Admission Rank (ATAR) score required of high school graduates aspiring to become teachers, we and countless other practising teachers around the country shook our heads in disbelief and disgust at her disingenuous political posturing.

There is no way Plibersek could be under any illusion that there is a research basis for this policy. For someone apparently so committed to academic standards, she has not offered a shred of evidence that raising the required marks for teaching degrees to the top 30% of school leavers will actually improve the quality of teaching in schools. Moreover, when the Australian Council of Deans of Education respectfully explained that there is no such evidence, she refused to back down, hitting back with the schoolyard taunt “try me”.

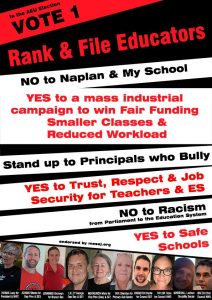

Even more disappointing is that the President of the Australian Education Union (AEU), Correna Haythorpe, rushed to publicly endorse Plibersek’s shameful policy. Teachers see straight through Labor’s adversarial blame game and are fed up with the AEU leadership for failing to stand up for policies that genuinely support teachers and improve our education system.

The fixation on teacher quality as a solution to Australia’s declining results in international standardised tests – such as the OECD’s Programme for International Student Assessment (PISA) – is in itself wrongheaded. It overlooks strong evidence of the persistent link between students’ socio-economic status and their performance on standardised tests. A 2017 report on Australia’s PISA results showed that students from the highest quartile of socio-economic background perform, on average, three year levels higher than students from the lowest quartile.

Thus, if Labor is so concerned about the declining test results of Australian school students, why doesn’t it turn its attention to developing policies that address the growing inequality in Australian society and the socio-economic stratification of our school system?

Then, if Labor still wants to talk about improving teacher quality, why not ask teachers what is holding us back from providing a first-class education for our students? Research has consistently found excessive workloads to be among the top reasons why so many teachers leave the profession after just a few years of practice. Insufficient funding is the main cause of staff and resource shortages in our schools, which leave teachers overburdened with administrative duties.

Trends towards an ever-increasing focus on standardised tests and punitive monitoring of teacher performance have also proven extremely demotivating for teachers. These trends devalue the proven importance of teacher-student relationships to learning and constrain us from using our professional judgement to devise targeted learning programs for our students.

Addressing the real, pressing issues teachers face would go a long way towards attracting the best possible candidates to teaching degrees. Yet, ironically, through policies that place undue emphasis on teacher “quality”, Labor politicians have been key instigators of teacher bashing in the media, tacitly licensing parents and students to treat teachers with disrespect. Is this how they expect to make teaching a more appealing career choice for talented graduates?

There is a further, sadder irony in the fact that this policy rests on a premise that both research and teacher experience tell us is false – that a person’s capacity to learn the skills required for teaching can be determined by their ATAR score. In fact, the specific academic skills imperfectly tested in high-stakes Year 12 exams are only a small part of the much broader skillset required for teaching.

It is the proper role of teacher educators, not politicians, to determine prospective students’ suitability to fulfil the requirements of a teaching degree. Most teacher training courses now require prospective students to complete a range of assessments as part of their selection process, from general intellectual aptitude and social capabilities tests to personal statements about their motivations for pursuing teaching as a profession.

While these measures may not be perfect, they better reflect the breadth of what it takes to be a teacher and the reality that the best teachers will often come to the profession after fulfilling careers in other industries, bringing with them the immeasurable benefits of varied life experiences.

The most disturbing aspect of Labor’s policy is that higher ATAR score requirements for teaching courses would reinforce the language and cultural bias of the education system. ATAR scores, as much as standardised test results, sort students according to class and cultural background. Thus, the people most likely to be shut out from the teaching profession are those with language backgrounds other than English and those who have experienced socio-economic disadvantage.

With substantial evidence suggesting that students learn better when their teachers come from a similar cultural background, it is sad to see Labor promoting a policy that will make it even harder to increase the cultural and linguistic diversity of the teaching profession.

Labor finds it easier to blame universities for their over-enrolled teaching courses than to create policies that alleviate the pressures universities now face from massive funding cuts. It prefers to blame teachers for declining test results rather than accept the real socio-economic reasons for this decline.

It’s time for Labor to provide adequate funding to support disadvantaged students and look to build a teacher workforce that enables schools (and universities) to reflect the cultural diversity of the communities we serve. Teachers need the AEU to support us in holding Labor to account so we can achieve the best possible education system for our students.